How I built an AI-native design process

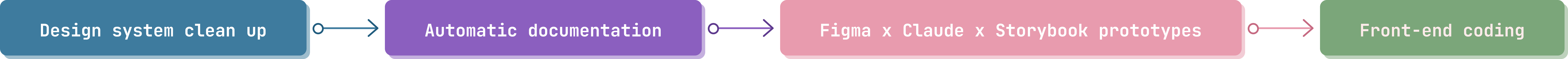

I built an AI-native design process at Pilotly, turning a sole-designer bottleneck into a pipeline where design system cleanup, documentation, prototypes, and front-end code all draw from a single source of truth.

keeping pace with engineering

Engineering at Pilotly had roughly 3x’d its output by leaning into AI tooling. Our design-to-engineering pipeline carried structural debt: documentation lagged the product, handoff relied on static annotated screens, and the codebase wasn’t built around a design system.

In Q1 2026, we set a design OKR:

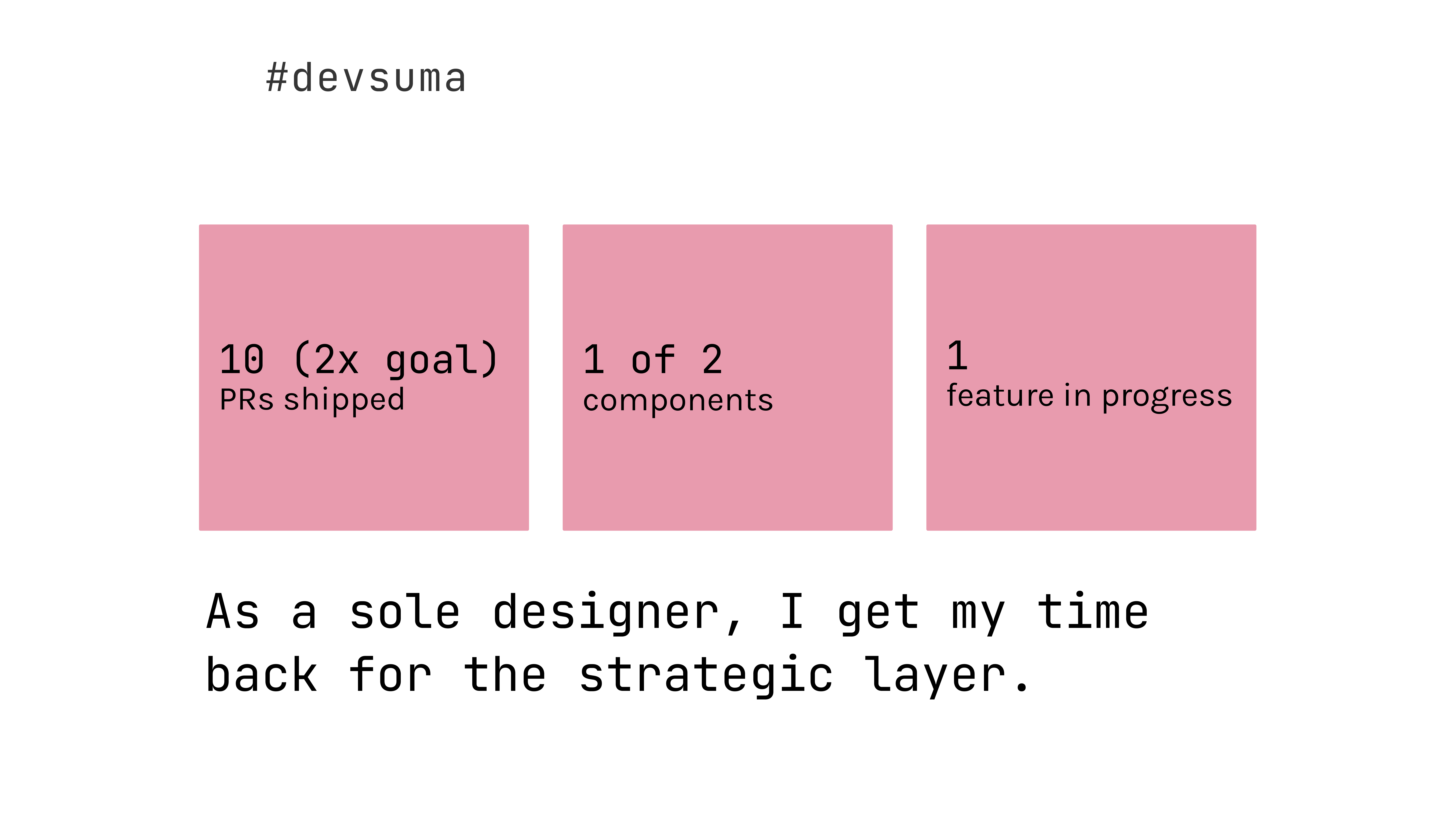

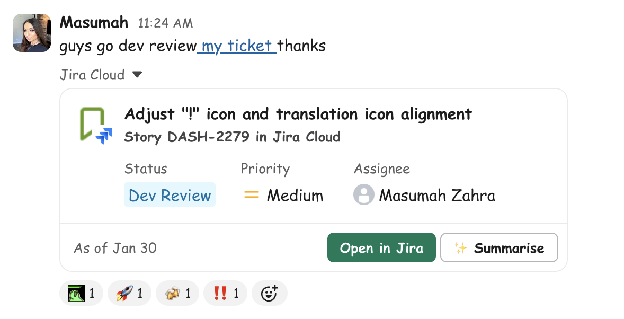

becoming #devsuma

In late 2025, my VP of Product and Engineering and I started discussing whether I could start coding with Claude. The reasoning was practical: if I could handle small visual polish fixes myself, engineers could focus on architecture and product.

I set three key results: ship five design-authored UI improvement PRs, bring two core UI components into the codebase, and deliver a feature.

Each phase opened the door to the next, often because Claude or Figma’s MCP shipped new capabilities mid-quarter.

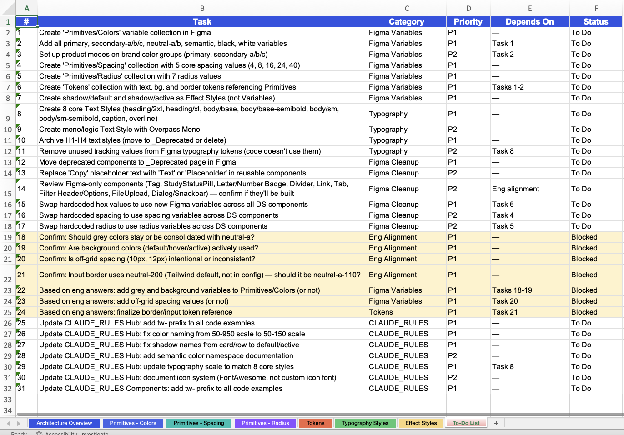

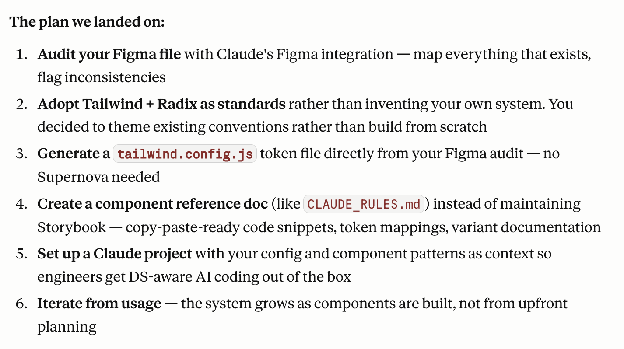

cleaning up the design system

I had Claude cross-reference every Figma component against actual usage in our codebase. Orphaned components were pruned; misplaced ones were rehomed. I unified design tokens into a single tokens.json so the system and the codebase finally spoke the same language. Without this foundation, none of the later phases would have worked.
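A minimal sketch of the kind of cross-reference described above, assuming the Figma component names have already been exported to a plain list and that components appear in the code as JSX/TSX tags such as `<Button`. The component names, file extensions, and helper functions here are hypothetical illustrations, not Pilotly’s actual tooling.

```python
import re
from pathlib import Path

# Hypothetical: component names exported from the Figma library.
FIGMA_COMPONENTS = ["Button", "Card", "LegacyBanner", "Tooltip"]

# Assumption: components are used as JSX/TSX tags, e.g. <Button ...> or <Button/>.
SOURCE_SUFFIXES = {".tsx", ".jsx"}


def count_usages(names, sources):
    """Count how often each component name appears as an opening JSX tag."""
    counts = {name: 0 for name in names}
    for text in sources:
        for name in names:
            # \b stops "<Button" from matching inside "<ButtonGroup".
            counts[name] += len(re.findall(rf"<{re.escape(name)}\b", text))
    return counts


def find_orphans(names, sources):
    """Return Figma components that never appear as a tag in the sources."""
    counts = count_usages(names, sources)
    return sorted(name for name, n in counts.items() if n == 0)


def read_sources(root):
    """Read every .tsx/.jsx file under root (hypothetical repo layout)."""
    return [
        p.read_text(encoding="utf-8")
        for p in Path(root).rglob("*")
        if p.suffix in SOURCE_SUFFIXES and p.is_file()
    ]


if __name__ == "__main__":
    sample = [
        "export const A = () => <Card><Button label='Go' /></Card>;",
        "export const B = () => <Tooltip text='hi'><ButtonGroup /></Tooltip>;",
    ]
    print(find_orphans(FIGMA_COMPONENTS, sample))
```

Anything this reports is a candidate for review rather than automatic deletion: a component rendered dynamically or imported under an alias would not show up as a plain tag.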

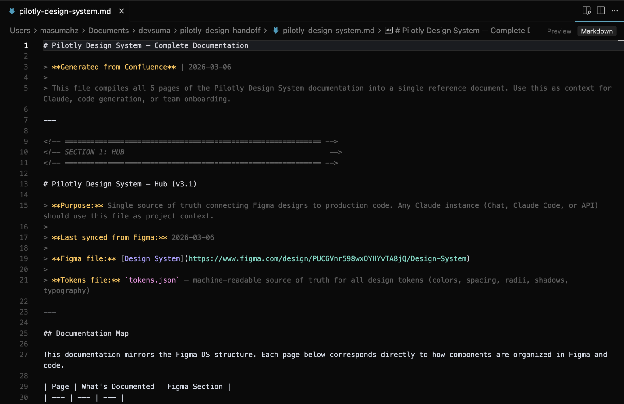

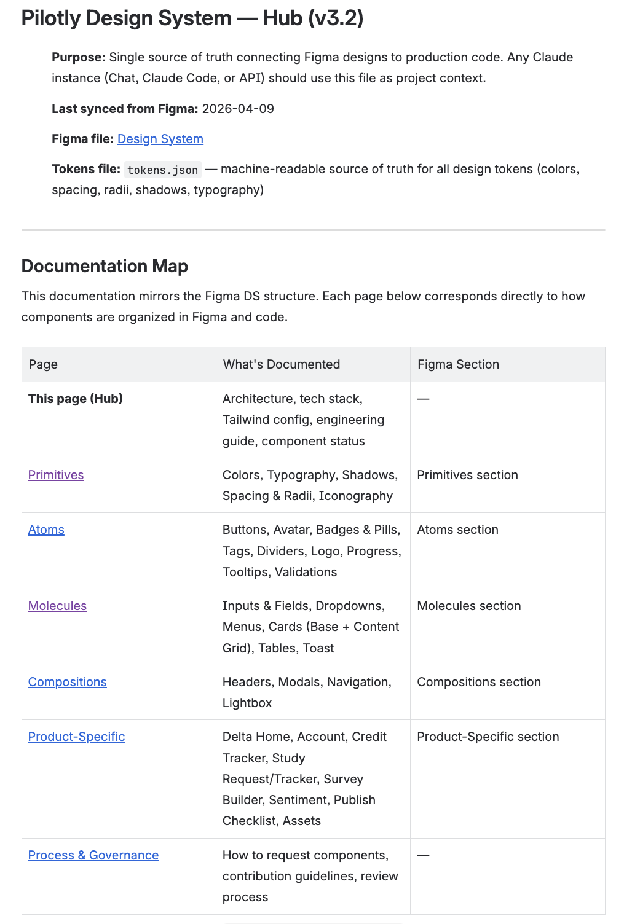

documentation that refreshes itself

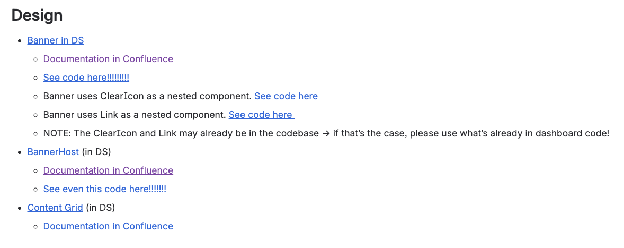

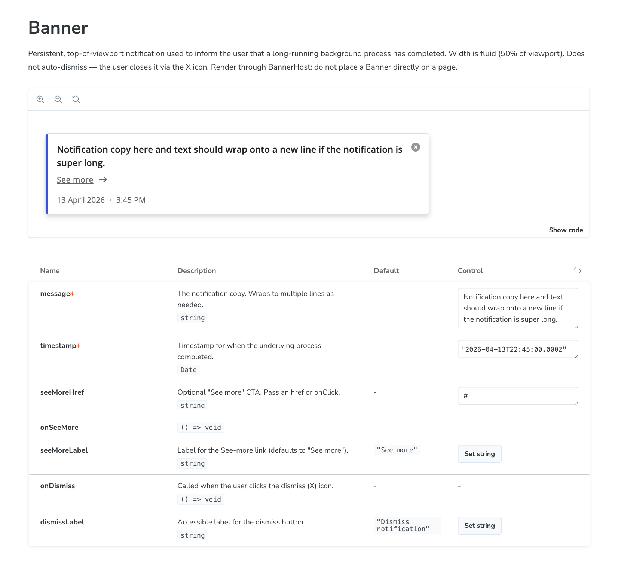

With the system clean, Claude generated component and token documentation and pushed it to Confluence. Documentation used to live in three places (Figma, scattered docs, my head). Now it regenerates from the actual system in minutes.
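To make “regenerates from the actual system” concrete, here is a small sketch that flattens a tokens.json file into a Markdown table that could then be published. It assumes a nested format in the style of the W3C Design Tokens Community Group draft, where each token is an object with `$value` and an optional `$type`. The sample tokens are made up, type inheritance from groups is ignored for brevity, and the Confluence publishing step is deliberately left out rather than guessed at.

```python
import json

# Hypothetical sample in a DTCG-style format ($value / $type).
SAMPLE_TOKENS = """
{
  "color": {
    "brand": {
      "primary": {"$value": "#3355FF", "$type": "color"}
    },
    "text": {"$value": "#1A1A1A", "$type": "color"}
  },
  "space": {
    "sm": {"$value": "8px", "$type": "dimension"}
  }
}
"""


def flatten_tokens(node, path=()):
    """Yield (dotted.name, value, type) for every leaf token in the tree."""
    if isinstance(node, dict) and "$value" in node:
        yield ".".join(path), node["$value"], node.get("$type", "")
        return
    if isinstance(node, dict):
        for key, child in node.items():
            # Keys starting with "$" (e.g. "$description" on a group) are metadata.
            if not key.startswith("$"):
                yield from flatten_tokens(child, path + (key,))


def tokens_to_markdown(tokens):
    """Render the flattened tokens as a Markdown table, sorted by token name."""
    rows = sorted(flatten_tokens(tokens))
    lines = ["| Token | Value | Type |", "| --- | --- | --- |"]
    lines += [f"| `{name}` | `{value}` | {kind} |" for name, value, kind in rows]
    return "\n".join(lines)


if __name__ == "__main__":
    print(tokens_to_markdown(json.loads(SAMPLE_TOKENS)))
```

Because the table is derived entirely from the token file, rerunning it after a token change is all it takes to bring this slice of the docs back in sync.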

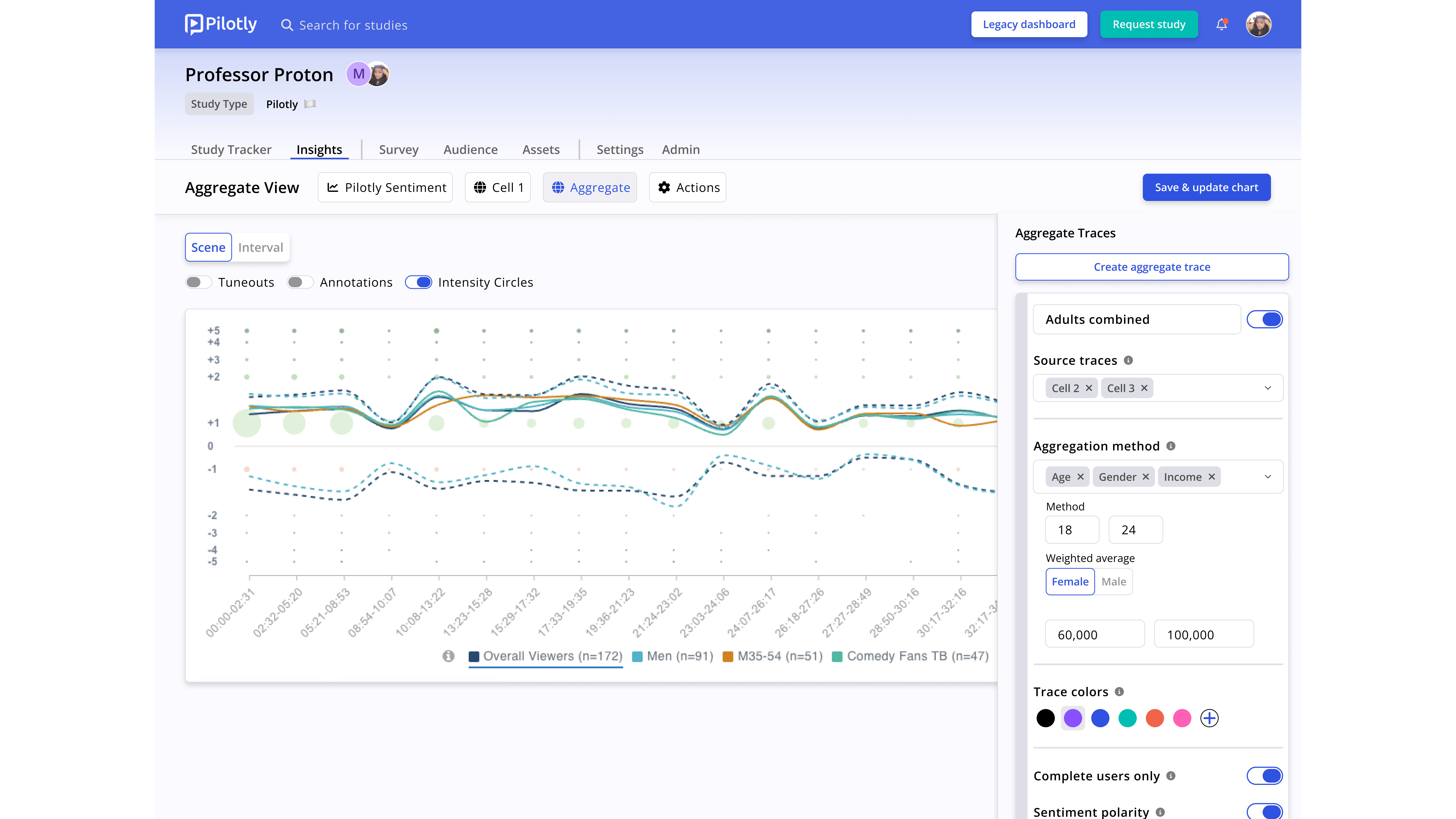

click-through design reviews

Using the Figma MCP, Claude reads hi-fi designs and produces interactive Storybook prototypes. New feature scoping is now reviewed on a prototype, not on static screens.

generating UX flows and code

Mid-quarter, an update to the Figma MCP let Claude create designs, not just read them. What I learned mid-experiment: working from documented components produces dramatically better output than generating from a vague prompt. The cleaner the context, the better the output.

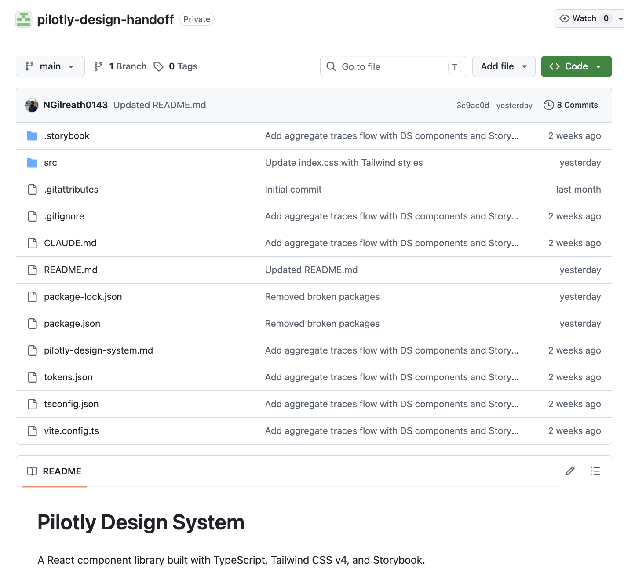

the handoff repo

When an engineer picks up a ticket now, they pull from a repo and find the PRD, static designs, Confluence docs, and a working code starter, all generated from a system that’s already in sync.
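One way to keep that promise checkable is a small script that reports when a ticket’s handoff folder is missing any of the four artifacts listed above. The entry names below (`prd.md`, `designs`, `docs`, `starter`) and the one-folder-per-ticket layout are hypothetical; the post doesn’t describe the repo’s actual structure.

```python
import sys
from pathlib import Path

# Hypothetical layout: one folder per ticket containing these top-level entries.
REQUIRED = {
    "prd.md": "PRD",
    "designs": "static designs",
    "docs": "exported Confluence docs",
    "starter": "working code starter",
}


def missing_artifacts(present):
    """Given the entry names found in a ticket folder, return labels of missing artifacts."""
    return [label for name, label in REQUIRED.items() if name not in present]


def check_folder(ticket_dir):
    """List a ticket folder's top-level entries and report what is missing."""
    present = {p.name for p in Path(ticket_dir).iterdir()}
    return missing_artifacts(present)


if __name__ == "__main__":
    gaps = check_folder(sys.argv[1] if len(sys.argv) > 1 else ".")
    if gaps:
        print("Missing: " + ", ".join(gaps))
        sys.exit(1)
    print("Handoff folder complete.")
```

Keeping the folder listing separate from the comparison makes the check easy to run in CI or by hand before a ticket is opened.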

This repo is the proof point for taking on larger feature work with this process. It’s also where handoff errors have dropped off. Engineers used to flag missing states, ambiguous spacing, or unclear interaction logic, and we’d go back and forth. Now most of that gets caught before the ticket is opened.

design x engineering

Our engineers use Claude in their day-to-day work too, and they’ve started reporting that the code Claude produces for them is noticeably better when the shared context is at hand. The pipeline is amplifying engineering output without anyone changing how they work.

a designer building a feature

Engineering didn’t have bandwidth this quarter for a pillar feature, so my PM and I are coding it ourselves through the AI-native pipeline.

What I’m testing here is whether the pipeline scales from a single component to a full feature. My hypothesis: a PRD, documented Confluence components, and the tokens.json file together give Claude enough context to write production-ready code.
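The hypothesis amounts to assembling three inputs into one context before asking for code. A minimal sketch of that assembly step, assuming the PRD and per-component docs are available as local Markdown files and tokens.json is parsed JSON. The file layout, section headers, and function names are hypothetical, and how the resulting text reaches Claude is left out.

```python
import json
from pathlib import Path


def build_context(prd_text, component_docs, tokens):
    """Combine PRD, component docs, and tokens into one Markdown context string.

    component_docs maps a component name to its documentation text;
    tokens is the parsed tokens.json (any JSON-serializable structure).
    """
    parts = ["# PRD", prd_text.strip(), "# Components"]
    for name in sorted(component_docs):
        parts.append(f"## {name}")
        parts.append(component_docs[name].strip())
    parts.append("# Design tokens (tokens.json)")
    # Sorted keys keep the output stable, so diffs between runs stay small.
    parts.append(json.dumps(tokens, indent=2, sort_keys=True))
    return "\n\n".join(parts)


def load_context(prd_path, docs_dir, tokens_path):
    """Hypothetical layout: one Markdown file per component in docs_dir."""
    docs = {p.stem: p.read_text(encoding="utf-8") for p in Path(docs_dir).glob("*.md")}
    tokens = json.loads(Path(tokens_path).read_text(encoding="utf-8"))
    return build_context(Path(prd_path).read_text(encoding="utf-8"), docs, tokens)


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    ctx = build_context(
        "Example PRD text.",
        {"Button": "Variants: primary, secondary.", "Card": "Padding uses space.md."},
        {"space": {"md": {"$value": "16px"}}},
    )
    print(ctx)
```

The ordering (PRD first, tokens last) is an arbitrary choice here; the point is that every section comes from sources already in sync rather than from a free-form prompt.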

The work is still in progress. We might ship it ourselves, or engineering might pull it across the finish line. Either outcome will teach us whether non-engineers can ship a pillar feature through this pipeline.

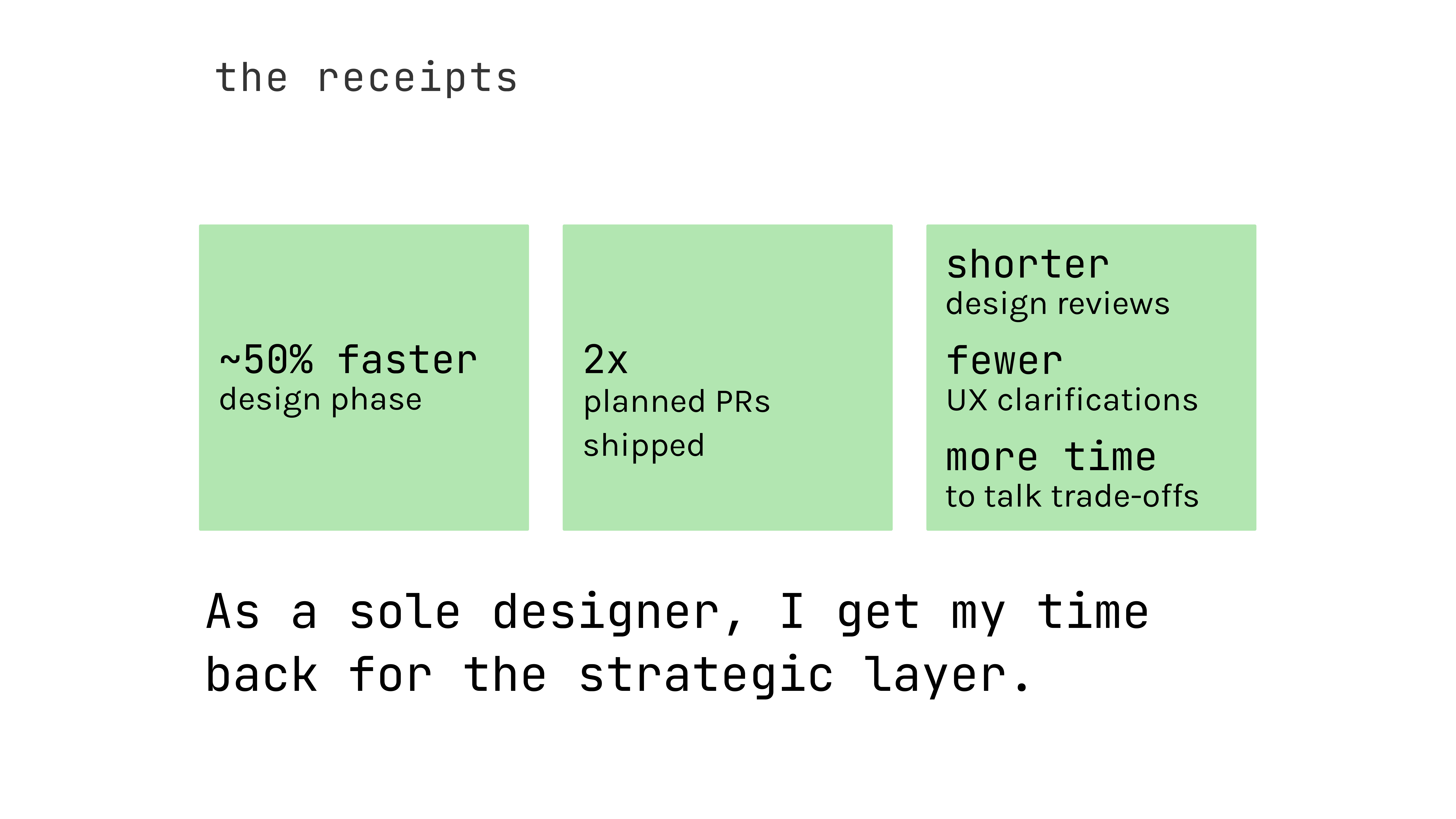

AI’s impacts

what i learned

AI didn’t replace any phase of design; it compressed the mechanical layer so I could spend more time in the strategic one.

Stable foundations make AI useful. Without Phase 1, the design system cleanup, none of this would have worked.

None of this came at the expense of quality.

where i see this going

A designer or PM brings a DRD or PRD, and Claude returns multiple UX flow options in Figma (or now, in Claude Design!), drawn from documented components and patterns. Design refines them, code is generated from the result, and engineering picks up tickets with something that already speaks the codebase’s language.

AI is the foundational layer of how we work.

I’ll write the next version of this piece when Build Audience ships.